Is AI Bad?

Recently, several companies that develop Artificial Intelligence (AI) sent an open letter to the UN voicing concerns over the use of AI in weapons. These companies are concerned that AI will be used to develop weapons that act independently of humans. They call it the “third revolution in warfare,” which will “permit armed conflict to be fought at a scale greater than ever, and at timescales faster than humans can comprehend.”

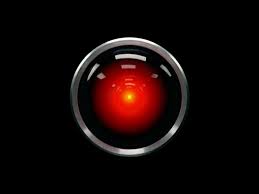

This is scary stuff, but it shouldn’t surprise anyone that this is a possibility, especially if you have read or watched much science fiction over the last few decades. Most people would probably point to the Terminator movie franchise, where Skynet takes over the world from humans, as an example. There are other examples; for me, the first one that got me thinking about this topic was 2001: A Space Odyssey.

I’m sorry, Dave. I’m afraid I can’t do that.

HAL’s monotone voice only enhanced the creepy factor. There was no obvious malice involved in HAL’s non-compliance, was there?

2001: A Space Odyssey came out in 1968. I remember watching it as a kid and thinking it was weird, but that voice has stayed with me since.

Over the years, there have been several movies that took on the AI question:

- WarGames (1983) – AI bad.

- Terminator (1984) – AI bad. (First movie bad, but mixed in later movies with “good” terminator)

- Maximum Overdrive (1986) – AI bad. (Of course this one is a bit campy, but it does have AC/DC music)

- The Matrix (1999) – AI mixed. (First movie bad, but got mixed up in later movies)

- AI: Artificial Intelligence (2001) – AI good.

- I, Robot (2004) – AI mixed.

- Chappie (2015) – AI good.

The AI comments are mine (you may not agree). I’m sure there are other movies that I didn’t see, but there seems to be a trend where AI is becoming more acceptable, less scary (in the movies at least). What does this mean about the acceptance of AI in our society?

I’m with the letter writers. Yes, Artificial Intelligence is cool, but it is way too scary for me to be happy about it being developed. A couple of recent developments reinforce my concern:

- Facebook’s Artificial Intelligence Robots Shut Down After They Start Talking to Each Other in Their Own Language

- Google’s New AI Has Learned to Become “Highly Aggressive” in Stressful Situations

While human intelligence may be somewhat of an oxymoron at times, I say let’s keep the robot programming simple, and let people think for themselves. Who’s with me?

Hey, what’s that button?